Microsoft Azure Cosmos DB (formerly known as DocumentDB) is Microsoft’s globally distributed, multi-model database service. It is purely a Platform as a Service (PaaS) offering, meaning you don’t need to spin up a VM for Cosmos DB. Microsoft started Project “Florence” in 2010, which was released as the DocumentDB service with the vision of a document-based database. The Azure Table storage offering already provided a facility for storing data as key-value pairs. After embracing open source technologies in Azure, Microsoft also started supporting open source databases such as Cassandra, MongoDB, and MySQL (through Azure Database for MySQL).

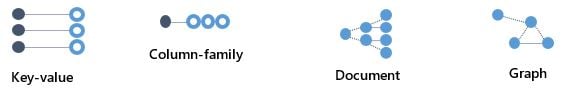

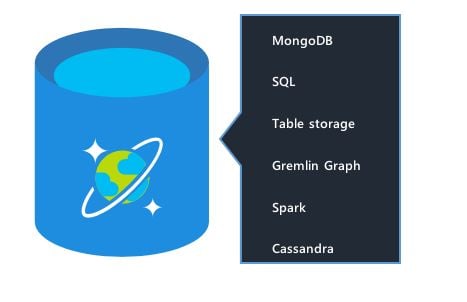

Azure Cosmos DB was launched in 2017 with the vision of a multi-model, globally scalable database with turnkey global distribution. It currently supports several data models, including document (Core SQL), key-value (Table), and graph.

Developers can work with all of these models through the set of APIs exposed by the Azure Cosmos DB NuGet packages. Overall, you can see Cosmos DB as a unified offering that supports multiple models from one service with the help of multiple API sets.

It provides transparent replication of your data across any number of Azure regions. You can elastically scale throughput and storage and take advantage of fast, single-digit-millisecond data access (under 10 ms).

How to create Azure Cosmos DB using Azure portal?

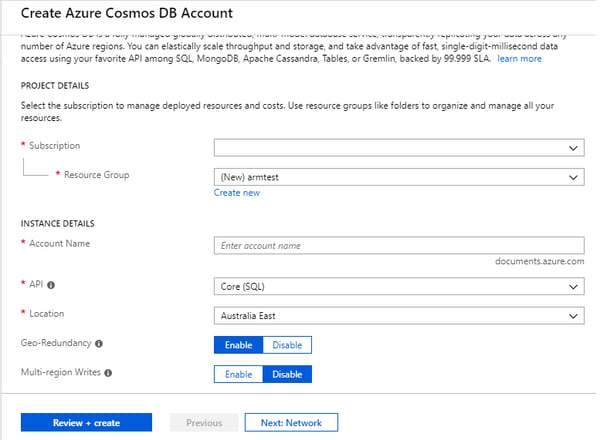

You can create a new instance of Azure Cosmos DB from the Azure portal. Go to the global search bar and type “Azure Cosmos DB”.

Choose a subscription and resource group, then give the instance a name. Note that the URL of the instance is <<Your Name>>.documents.azure.com. The API drop-down lets you select the API model that fits your business need, such as Core SQL, Table API, or Graph. Geo-redundancy is enabled by default, but you can disable it and re-enable it later (after provisioning). Multi-region writes are disabled by default, but you can likewise turn them on with a single click after provisioning. Whether to enable multi-region writes is a judgment call based on the nature and structure of your application.

You can also place the instance in an existing VNET if you already have one. If you are spinning up an instance for demonstration purposes only, you can skip the network section. Once you hit Review + create, a new instance of Azure Cosmos DB is spun up for you. This entire activity can also be done programmatically through the APIs. If you choose the SQL API, you can also run SQL queries against your schema/document collection.

To access Azure Cosmos DB from your code, add the Cosmos DB endpoint and key to your app.config as shown below:

```xml
<appSettings>
  <!-- Add Cosmos DB endpoint URL -->
  <add key="CosmoEndpoint" value="https://xyz.documents.azure.com:443/"/>
  <!-- Add primary key -->
  <add key="CosmoMasterKey" value="YourKey"/>
</appSettings>
```

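You can use these settings from your main function or any module, as in the following example:

```csharp
using System;
using System.Configuration;               // ConfigurationManager (reference System.Configuration)
using System.Linq;                        // ToList()
using Microsoft.Azure.Documents.Client;   // DocumentClient, FeedOptions, UriFactory

class Program
{
    static void Main()
    {
        // Read the endpoint and key from <appSettings> in app.config.
        var endpoint = ConfigurationManager.AppSettings["CosmoEndpoint"];
        var masterKey = ConfigurationManager.AppSettings["CosmoMasterKey"];

        using (var client = new DocumentClient(new Uri(endpoint), masterKey))
        {
            Console.WriteLine("\r\n-- Querying Document (JSON) --");

            var databaseId = "<Your Schema Name>";
            var collectionId = "<Your Collection Name>";

            // Allow the query to fan out across all partitions of the collection.
            var feedOptions = new FeedOptions { EnableCrossPartitionQuery = true };

            var collectionUri = UriFactory.CreateDocumentCollectionUri(databaseId, collectionId);
            var response = client.CreateDocumentQuery(collectionUri, "select * from c", feedOptions).ToList();

            // Each item prints as its JSON representation.
            foreach (var item in response)
            {
                Console.WriteLine(item);
                Console.WriteLine("\r\n");
            }

            Console.ReadKey();
        }
    }
}
```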
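When a query needs to filter on a value, a parameterized query avoids building the SQL text by string concatenation. The following is a minimal sketch, assuming the same `DocumentClient` setup as in the sample above; the `city` property, the `CityQuerySample` class, and its method names are hypothetical and chosen only for illustration.

```csharp
using System;
using Microsoft.Azure.Documents;          // SqlQuerySpec, SqlParameter, SqlParameterCollection
using Microsoft.Azure.Documents.Client;   // DocumentClient, FeedOptions, UriFactory

static class CityQuerySample
{
    // Hypothetical helper: builds a parameterized query over an assumed "city" property.
    public static SqlQuerySpec BuildCityQuery(string city)
    {
        return new SqlQuerySpec(
            "SELECT * FROM c WHERE c.city = @city",
            new SqlParameterCollection { new SqlParameter("@city", city) });
    }

    // Runs the query against the given collection and prints each matching document.
    public static void PrintDocumentsInCity(
        DocumentClient client, string databaseId, string collectionId, string city)
    {
        var collectionUri = UriFactory.CreateDocumentCollectionUri(databaseId, collectionId);
        var feedOptions = new FeedOptions { EnableCrossPartitionQuery = true };

        foreach (var item in client.CreateDocumentQuery<dynamic>(
            collectionUri, BuildCityQuery(city), feedOptions))
        {
            Console.WriteLine(item);
        }
    }
}
```

Inside the `using (var client = ...)` block from the earlier sample, this could be called as `CityQuerySample.PrintDocumentsInCity(client, databaseId, collectionId, "Pune");`.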

A Request Unit (RU) is a rate-based currency that abstracts the physical resources needed to perform a request.

1 RU = 1 Read of 1 KB Document
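To make the unit concrete, here is a rough back-of-the-envelope sketch in C#. It relies only on the figure stated above (one read of a 1 KB document costs 1 RU); the `RequestUnitEstimate` class is hypothetical, and real charges depend on document size, indexing, and query shape, so treat the result as a starting point rather than a quote.

```csharp
using System;

static class RequestUnitEstimate
{
    // From the text: one read of a 1 KB document costs 1 RU.
    const double RuPerOneKbRead = 1.0;

    // Estimates the RU/s needed to sustain a given number of 1 KB reads per second.
    public static double ForReads(double readsPerSecond)
    {
        if (readsPerSecond < 0)
        {
            throw new ArgumentOutOfRangeException(nameof(readsPerSecond));
        }
        return readsPerSecond * RuPerOneKbRead;
    }
}
```

For an assumed workload of 500 reads per second of 1 KB documents, `RequestUnitEstimate.ForReads(500)` gives 500 RU/s as the read budget to provision.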

Reads and indexed writes complete in under 10 ms at the 99th percentile within the same Azure region. Customers can elastically scale the throughput of a container by programmatically provisioning RU/s (and/or RU/m) on it. Because throughput is scaled elastically through horizontal partitioning of resources, each resource partition must deliver its portion of the overall throughput within a given budget of system resources.
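Provisioning throughput programmatically could look like the sketch below, which uses the same DocumentDB .NET SDK as the earlier query sample. The `ThroughputSample` class, the `/deviceId` partition key path, and any IDs or RU/s values passed in are assumptions for illustration only.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.Documents;          // Database, DocumentCollection
using Microsoft.Azure.Documents.Client;   // DocumentClient, RequestOptions, UriFactory

static class ThroughputSample
{
    // Builds a collection definition partitioned on an assumed "/deviceId" path.
    public static DocumentCollection BuildCollection(string collectionId)
    {
        var collection = new DocumentCollection { Id = collectionId };
        collection.PartitionKey.Paths.Add("/deviceId");
        return collection;
    }

    // Creates the database and collection if they are missing, provisioning
    // the requested RU/s on the collection at creation time.
    public static async Task EnsureCollectionAsync(
        DocumentClient client, string databaseId, string collectionId, int requestUnitsPerSecond)
    {
        await client.CreateDatabaseIfNotExistsAsync(new Database { Id = databaseId });

        await client.CreateDocumentCollectionIfNotExistsAsync(
            UriFactory.CreateDatabaseUri(databaseId),
            BuildCollection(collectionId),
            new RequestOptions { OfferThroughput = requestUnitsPerSecond });
    }
}
```

From synchronous code, a call such as `ThroughputSample.EnsureCollectionAsync(client, "iot", "telemetry", 400).Wait();` would create a collection with 400 RU/s if it does not exist yet. If the collection already exists, the call leaves its current throughput unchanged.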

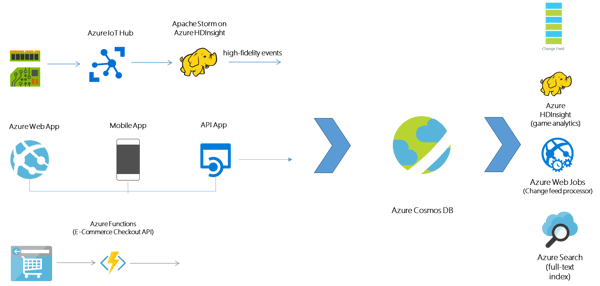

Scenarios where Azure Cosmos DB can be used

There are plenty of scenarios where Azure Cosmos DB can be used. Some of the use cases are:

- IoT devices sending data and high-fidelity events stored in Cosmos DB

- App services connected to Azure Cosmos DB (Web App, Mobile App and API App)

- Session persistence in e-commerce type of apps

Moving from Azure Table Storage to Azure Cosmos DB and Migration Tool

The advantage of moving from Azure Table Storage to Azure Cosmos DB is that you get all the features and SLAs of Azure Cosmos DB, and the data is easy to access and query. In practice you don’t need many code changes, since the way you access the data (even at the API code level) is similar to Azure Storage. So you get the key-value storage benefits of Azure Table Storage plus turnkey scalability, geo-replication, multi-region writes, single-digit-millisecond latency, and the other SLAs at a low price. The following quick sample shows how similar the code is to Azure Table Storage when you access the same set of schemas from Azure Cosmos DB.

```csharp
using Microsoft.Azure.Storage;
using Microsoft.Azure.CosmosDB.Table;

// Cosmos DB Table API connection string; note the table.cosmosdb.azure.com endpoint.
var connectionString = "DefaultEndpointsProtocol=https;AccountName=<<Your Name>>;AccountKey=<<YourKey>>;TableEndpoint=https://yourname.table.cosmosdb.azure.com:443/;";

CloudStorageAccount storageAccount = CloudStorageAccount.Parse(connectionString);
CloudTableClient tableClient = storageAccount.CreateCloudTableClient();
```
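Continuing from the `tableClient` above, writing and reading an entity follows the familiar Table storage pattern. This is a minimal sketch, assuming the `Microsoft.Azure.CosmosDB.Table` package used in the snippet; the `people` table name and the `CustomerEntity` and `TableSample` classes are hypothetical.

```csharp
using Microsoft.Azure.CosmosDB.Table;   // CloudTable, CloudTableClient, TableEntity, TableOperation

// Hypothetical entity: partitioned by last name, keyed by first name.
public class CustomerEntity : TableEntity
{
    // Parameterless constructor is needed so the SDK can materialize query results.
    public CustomerEntity() { }

    public CustomerEntity(string lastName, string firstName)
    {
        PartitionKey = lastName;
        RowKey = firstName;
    }

    public string Email { get; set; }
}

public static class TableSample
{
    // Upserts one customer and reads it back by partition key and row key.
    public static CustomerEntity SaveAndReload(CloudTableClient tableClient, CustomerEntity customer)
    {
        CloudTable table = tableClient.GetTableReference("people");
        table.CreateIfNotExists();

        table.Execute(TableOperation.InsertOrReplace(customer));

        TableResult result = table.Execute(
            TableOperation.Retrieve<CustomerEntity>(customer.PartitionKey, customer.RowKey));
        return (CustomerEntity)result.Result;
    }
}
```

The same entity class and operations are what you would write against Azure Table Storage, which is why the migration mostly comes down to swapping the connection string and package.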

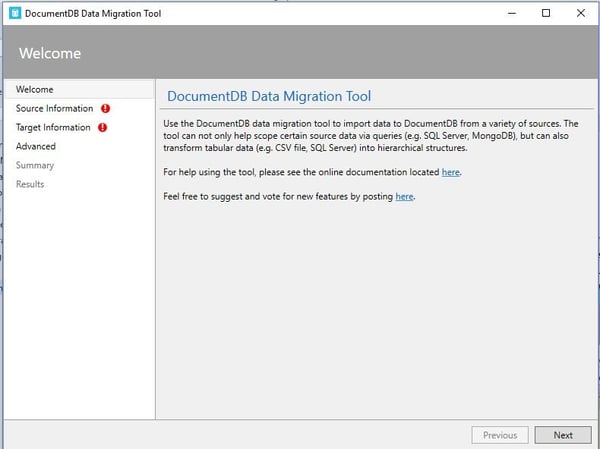

Migration Tool

Microsoft provides the DocumentDB Data Migration Tool, which lets you migrate data between the following sources and targets and the multi-model Azure Cosmos DB service:

- JSON files

- MongoDB

- MongoDB Export files

- SQL Server

- CSV files

- Azure Table storage

- Amazon DynamoDB

- Blob

- Azure Cosmos DB collections

- HBase

- Azure Cosmos DB bulk import

- Azure Cosmos DB sequential record import

Key highlights of Azure Cosmos DB

- Strong consistency: linearizability (once an operation completes, it is visible to all readers)

- 99.99% availability and low latency

- Session consistency: predictable consistency within a session, with high read throughput and low latency

- Consistent prefix: reads never see out-of-order writes (no gaps)

- Eventual consistency: potential for out-of-order reads, but the lowest read cost of all consistency levels
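Several of the points above describe trade-offs between Cosmos DB consistency levels. In the .NET SDK used earlier, a consistency level can be requested when the client is created and relaxed for individual queries. The sketch below assumes the account's default consistency is at least as strong as Session, since a client can only ask for the same or a weaker level than the account default. The `ConsistencySample` class and its method names are hypothetical.

```csharp
using System;
using Microsoft.Azure.Documents;          // ConsistencyLevel
using Microsoft.Azure.Documents.Client;   // DocumentClient, ConnectionPolicy, FeedOptions

static class ConsistencySample
{
    // Creates a client that requests session consistency for its operations.
    public static DocumentClient CreateSessionClient(string endpoint, string masterKey)
    {
        return new DocumentClient(
            new Uri(endpoint),
            masterKey,
            new ConnectionPolicy(),
            ConsistencyLevel.Session);
    }

    // Query options that give up ordering guarantees in exchange for the cheapest reads.
    public static FeedOptions EventualReadOptions()
    {
        return new FeedOptions
        {
            EnableCrossPartitionQuery = true,
            ConsistencyLevel = ConsistencyLevel.Eventual
        };
    }
}
```

Passing `ConsistencySample.EventualReadOptions()` as the feed options of a query is one way to accept possibly out-of-order reads for a workload where read cost matters more than ordering.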

Azure Cosmos DB started as “Project Florence” in late 2010 and eventually grew into Azure DocumentDB. Microsoft describes it as the first and only globally distributed database service today that offers comprehensive Service Level Agreements (SLAs) covering throughput, latency at the 99th percentile, availability, and consistency. Azure Cosmos DB is built upon the solid backbone of “Service Fabric”, which is a great distributed systems infrastructure.
