The data export process can broadly be divided into 2 steps:

Step 1: Export the data from SQL Server to Azure Blob

Step 2: Bulk load the data from Azure Blob to Snowflake using the COPY command

Step 1: Export the data from SQL Server to Azure Blob

Configure SQL Server Management Studio to export data to Azure blob storage

The Export wizard provided by SQL Server Management Studio can be used to export the SQL Server tables. SSMS by default doesn’t provide the option to use Azure blob as the destination for the exported data.

To use the Azure Blob as the destination you need to install the Microsoft SQL Server Integration Services Feature pack for Azure.

Export the data to Azure blob in Avro format

- Create a blob container on Azure where the exported files will be stored.

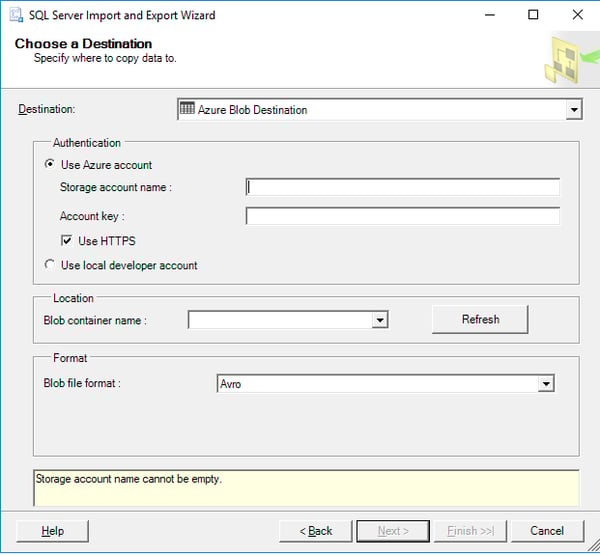

- Select Azure Blob Destination as the destination for the export in SSMS.

- Provide the Storage account name and account key for connecting to Azure Blob

- Choose the blob container as the export location.

- Choose AVRO as the blob file format.

If data is exported in delimited files there can be challenges around the delimited field being present in the data, NULL data values being exported as empty fields etc.

Snowflake COPY command provides support for AVRO files, so it is recommended to export the data in AVRO format to avoid these challenges. - Select the table to be exported or write a custom query to export the data.

Step 2: Bulk load the data from Azure to Snowflake using the COPY command

Once the data is exported to Azure blob, the data can be copied from Azure blob to Snowflake using the COPY command. Below are the steps to use the COPY command

- Create the corresponding tables in the Snowflake database

- Create a file format that uses all the default AVRO format options:

CREATE OR REPLACE FILE FORMAT my_avro_format TYPE = AVRO; - Create an external stage:

CREATE OR REPLACE STAGE my_azure_stage

URL='azure://myaccount.blob.core.windows.net/mycontainer/load/files'

CREDENTIALS=(AZURE_SAS_TOKEN='MY SAS token')FILE_FORMAT = my_avro_format; - Copy the data from Azure stage to Snowflake using the Avro parsing capabilities provided by Snowflake:

COPY INTO mytable

FROM (select

$1:column1,

$1:column2,

…

$1:columnN

From @my_azure_stage) FILE_FORMAT = (FORMAT_NAME = my_avro_format);

Recommendations

- Use AVRO as the file format for the Azure blob to avoid data problems during import.

- The performance of other queries may be impacted if the volume of data is too large. To avoid any impact of production queries, it is better to create a backup of the database on a separate machine and use this backup for the export.

- Use nolock while exporting the data. This will avoid lock on the tables while the data is being exported and will also result in faster export of the data.

- Install SSMS either on the same machine which has the SQL Server instance or on a machine which is in the same network of the SQL Server.

- If the data volume is too large, export data in batches using custom SQL queries.